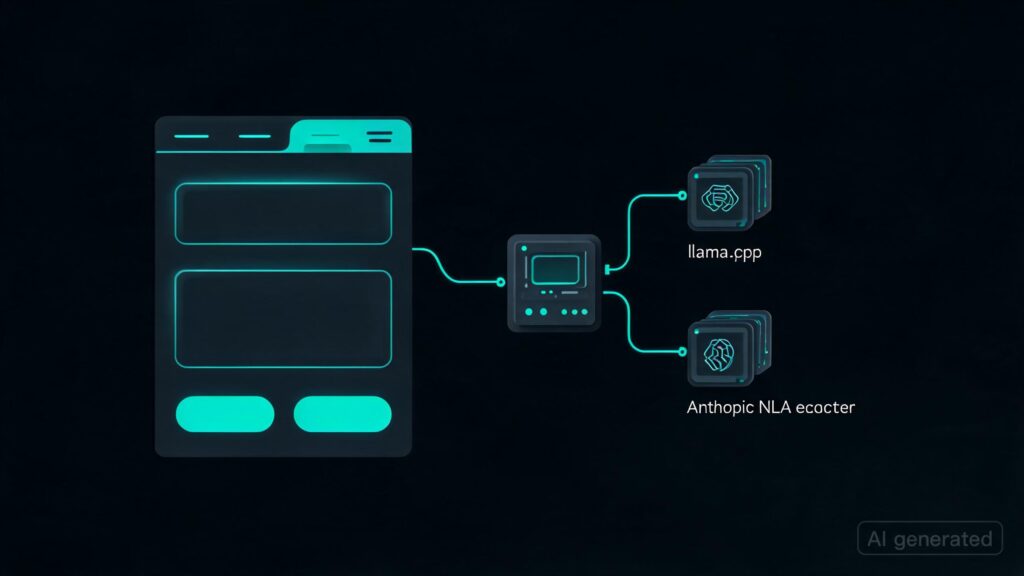

I made a UI and server for using Anthropic’s new Natural Language Autoencoders (NLAs) locally with llama.cpp. The project wraps all of the NLA features (activation extraction, explanation, reconstruction, and activation editing for steering) into a custom llama.cpp server. A Mikupad UI is also provided for token-level activation explanations and steering.

This work matters because it brings Anthropic’s Natural Language Autoencoders into infrastructure many people already run locally, namely llama.cpp. With the custom server and frontend, users can get detailed explanations of model activations and edit those activations to see how the model’s processing changes.

– Simpler local interaction with Anthropic’s NLAs through llama.cpp.

– A tailored UI for token-level activation explanations and edits.

– An integrated server and frontend that can support further research and development.

Originally published at reddit.com. Curated by AI Maestro.
