“`html

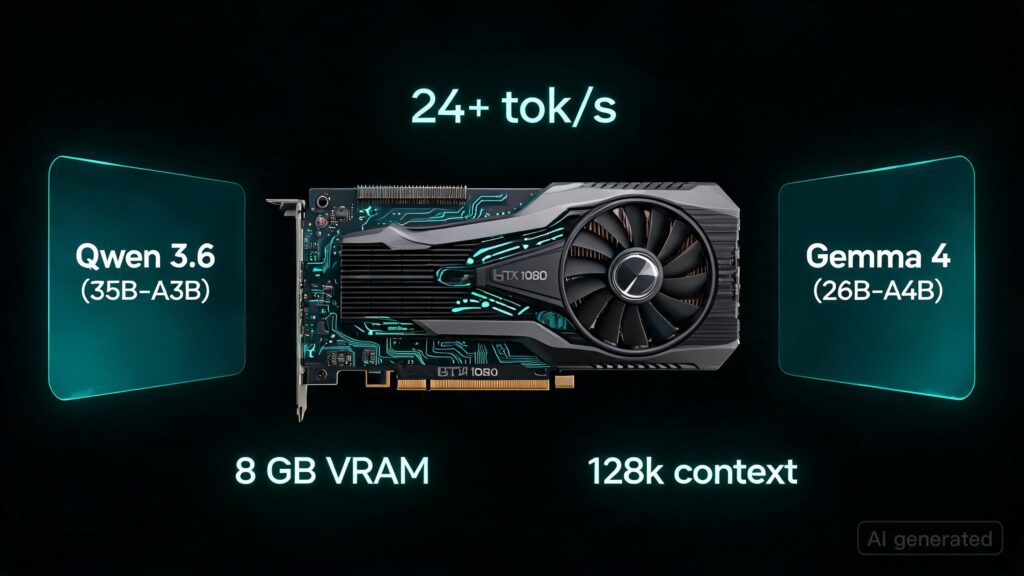

I recently ran two models with a substantial number of parameters—Qwen 3.6 (35B-A3B) and Gemma 4 (26B-A4B)—on an older GTX 1080 graphics card, which has only 8 GB VRAM. To achieve this, I used the llama.cpp library with a custom configuration to fit within the available memory constraints.

The key finding was that forcing the token embedding table onto the GPU with the flag --override-tensor-draft "token_embd\.weight=CUDA0" resulted in significant speed improvements. Without this adjustment, Gemma 4’s MTP (model training procedure) introduced a bottleneck due to its reliance on keeping the embedding table on CPU.

- The trick was offloading cold expert weights into system RAM and streaming them over PCIe to the GPU while keeping hot layers and the key-value cache on the GPU. This setup maximized the use of available memory and computational resources.

- Forcing the token embedding table onto the GPU with a specific flag enabled the model to avoid this performance bottleneck, leading to substantial speed improvements.

- The overall process required pinning an NVIDIA build to a specific branch (Pascal is going legacy), forcing GCC version 14 for CUDA 12.9 compatibility, and patching CUDA’s math_functions.h to ensure glibc 2.41 compatibility. These steps were necessary to support the GPU setup.

“`

“`

Originally published at reddit.com. Curated by AI Maestro.

Stay ahead of AI. Get the most important stories delivered to your inbox — no spam, no noise.