Hey everyone!

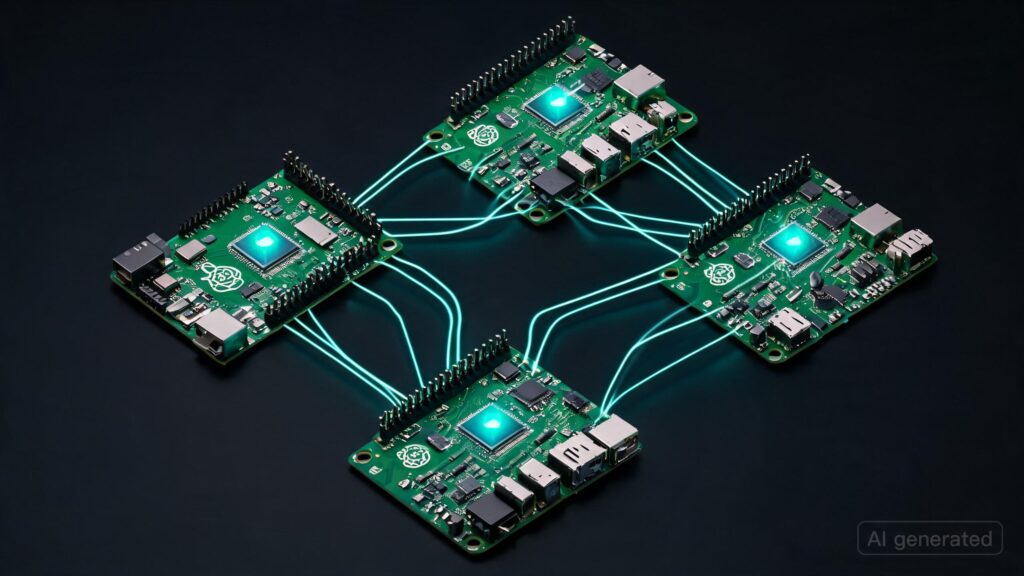

Recently, I released a blog on how to set up a cluster out of your Mac minis for distributed training and inference.

Now it’s time to do the same with Raspberry Pis!

- Quite cheap (30-50 dollars)

- Easy to use

- A full-blown OS on a board the size of a credit card (small enough for edge projects)!

This is part of my current series, where I’ll be releasing blogs and guides on distributed learning and building your own small compute clusters.

The goal is simple: help more people get started with running and training AI models using the hardware they already have lying around. Old laptops, MacBooks, Mac minis, Jetson Nanos, Raspberry Pis, even phones and tablets.

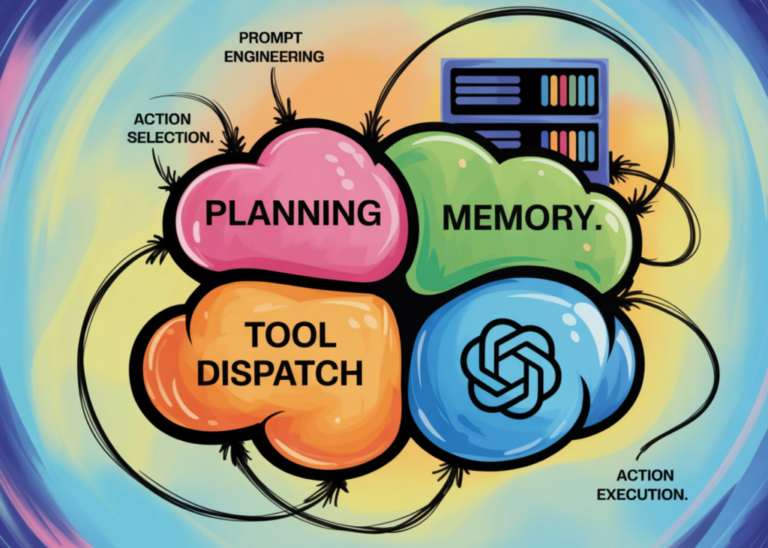

Distributed learning often feels intimidating from the outside, but it’s genuinely one of the coolest areas in systems and AI once you start playing with it yourself.

Before we get into the fun stuff like distributed inference and training, the first few posts will focus on setting up hardware properly and building a working cluster environment. Basically, a bit of cabling and networking!

- MacBooks and Mac minis (Done!)

- Jetson devices

- Raspberry Pis (This one hehe)

After that, we’ll move into quick demos (smolcluster), and gradually learn the fundamentals side-by-side while actually running models across devices.

I’m building this alongside smolcluster, so a lot of the content will stay very hands-on and practical instead of purely theoretical.

Hopefully this helps more people realize that distributed AI systems are not something reserved only for giant datacenters anymore.

There is just one question I want to answer: are heterogeneous clusters, like the one I’m trying to build, actually viable for running models?

- Well, we’ll find out! Until then, do read my blog and let me know what you all think. Any comments, feedback, etc. are very welcome. (pls be gentle since it’s my first time writing one all by myself haha)

Blog

Hail LocalAI!

PS: All this is for educational purposes only and is not meant to get performance on par with dedicated GPUs… well, not that I have figured out a way to do it yet. Please use these guides and the information in them to learn the basics of how distributed learning is done! Thanks