
Summary of Changes Made to the Language Model Stack

About three months ago, we were manually selecting a model for each task: testing prompts, comparing outputs across providers, and adjusting as needed. That worked at first, but it did not scale.

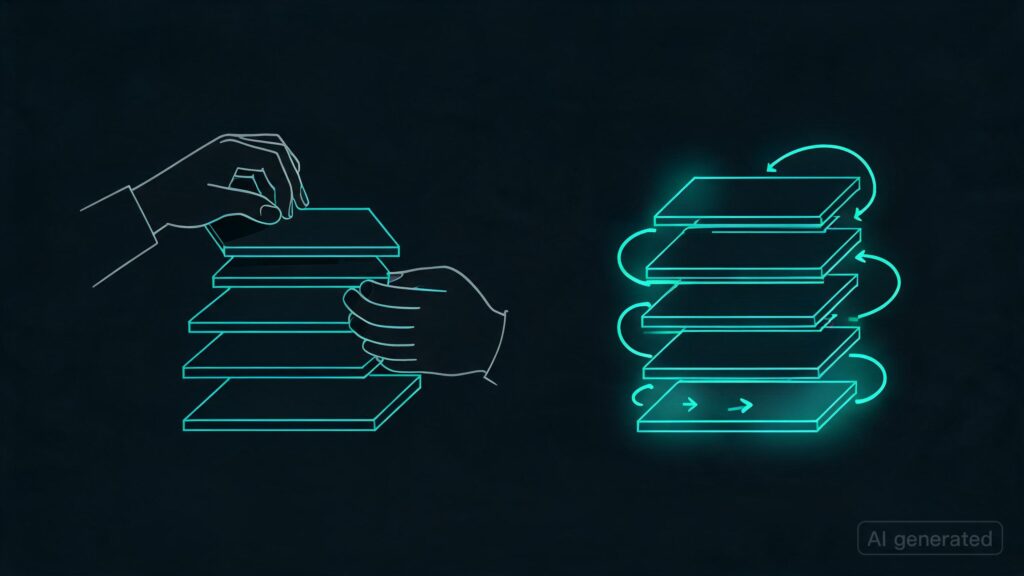

The Feedback Loop Solution

- We implemented a feedback loop that traced every request with its input, output, model used, token count, cost, latency, and a quality score.

- Using embeddings, the system clustered similar requests together, which let it identify the best-performing model for each kind of task.

- The router then fine-tuned a 7B-parameter language model (LM) specifically for our workloads: classification, tagging, and summarization. This LM reached 95% of GPT-5.1's accuracy on those tasks at only 2% of the cost of running multiple models.

- The approach paid off quickly: three weeks of collected traces provided enough data to fine-tune the model, which then performed significantly better than the previous setup.
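The trace-and-route idea above can be sketched roughly as follows. This is a minimal illustration, not the post's actual implementation: it assumes each trace stores an embedding of its input and a quality score in [0, 1], clusters the embeddings with a plain k-means, and then (as an assumed scoring rule) picks, per cluster, the cheapest model whose average quality clears a floor. The `Trace` fields mirror the ones listed above; `build_routing_table`, `route`, the `min_quality` threshold, and the `"frontier-model"` fallback are hypothetical names.

```python
import math
import random
from dataclasses import dataclass


@dataclass
class Trace:
    # Fields mirror what the post says was traced for every request.
    input_text: str
    output_text: str
    model: str
    tokens: int
    cost_usd: float
    latency_ms: float
    quality: float          # assumed to be in [0, 1]
    embedding: list[float]  # hypothetical: an embedding of input_text


def dist(a, b):
    return math.sqrt(sum((x - y) ** 2 for x, y in zip(a, b)))


def mean(vectors):
    n = len(vectors)
    return [sum(col) / n for col in zip(*vectors)]


def kmeans(points, k, iters=20, seed=0):
    """Plain k-means: returns k centroids for the given points."""
    rng = random.Random(seed)
    centroids = rng.sample(points, k)
    for _ in range(iters):
        groups = [[] for _ in range(k)]
        for p in points:
            nearest = min(range(k), key=lambda i: dist(p, centroids[i]))
            groups[nearest].append(p)
        # Keep the previous centroid if a cluster ends up empty.
        centroids = [mean(g) if g else centroids[i] for i, g in enumerate(groups)]
    return centroids


def nearest_cluster(embedding, centroids):
    return min(range(len(centroids)), key=lambda i: dist(embedding, centroids[i]))


def build_routing_table(traces, centroids, min_quality=0.8):
    """Per cluster, choose the cheapest model whose mean quality is >= min_quality."""
    stats = {}  # (cluster, model) -> [quality_sum, cost_sum, count]
    for t in traces:
        key = (nearest_cluster(t.embedding, centroids), t.model)
        s = stats.setdefault(key, [0.0, 0.0, 0])
        s[0] += t.quality
        s[1] += t.cost_usd
        s[2] += 1
    best = {}  # cluster -> (model, avg_cost)
    for (cluster, model), (q_sum, c_sum, n) in stats.items():
        avg_q, avg_c = q_sum / n, c_sum / n
        if avg_q < min_quality:
            continue  # model is too unreliable for this kind of request
        if cluster not in best or avg_c < best[cluster][1]:
            best[cluster] = (model, avg_c)
    return {cluster: model for cluster, (model, _) in best.items()}


def route(embedding, centroids, table, fallback="frontier-model"):
    """Send a request to its cluster's chosen model, or to a fallback if none qualified."""
    return table.get(nearest_cluster(embedding, centroids), fallback)
```

In use, you would rebuild the centroids and routing table periodically from fresh traces and call `route` on each incoming request's embedding. Routing on "cheapest model above a quality floor" is one plausible reading of "which models performed best"; weighting latency or using a learned router instead are equally valid choices.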

Improvements Over Time

- The system kept learning from its own operations. With no configuration changes for a month, costs fell by another 12% as the router made better-informed decisions based on real-world performance data.

- Hallucination detection also ran automatically on every response. Outputs flagged as incorrect became negative examples for future training rounds, while correct responses became positive training data.

- As a result, the system improved continuously. More traffic produced more traces, which made routing decisions better and lowered the cost per request: monthly spend fell from $420 in month 1 to below $73 in month 4, and was still dropping.
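The post does not say how hallucinations were detected or how the negative examples were consumed during training. The sketch below covers only the labeling step described above: each traced response passes through a detector, and the verdict decides whether it becomes a positive or a negative example. `Example`, `split_training_data`, and the toy `naive_number_check` detector are hypothetical; a real system would plug in its own detector (for example, an LLM judge or a grounding check).

```python
import re
from dataclasses import dataclass
from typing import Callable, Iterable

NUMBER = r"\d+(?:\.\d+)?"


@dataclass
class Example:
    prompt: str
    response: str
    label: int  # 1 = positive (kept as training data), 0 = negative (flagged)


def naive_number_check(prompt: str, response: str) -> bool:
    """Toy stand-in for a hallucination detector: flags a response that
    cites any number not present in the prompt (plausible for summaries)."""
    prompt_numbers = set(re.findall(NUMBER, prompt))
    return any(n not in prompt_numbers for n in re.findall(NUMBER, response))


def split_training_data(
    records: Iterable[tuple[str, str]],
    is_hallucination: Callable[[str, str], bool],
) -> tuple[list[Example], list[Example]]:
    """Label every (prompt, response) pair for the next training round."""
    positives, negatives = [], []
    for prompt, response in records:
        if is_hallucination(prompt, response):
            negatives.append(Example(prompt, response, 0))
        else:
            positives.append(Example(prompt, response, 1))
    return positives, negatives
```

How the negatives are then used (filtered out, paired with positives for preference tuning, or weighted in the loss) is a separate design decision the post leaves open.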

Key Takeaways

- Manual model selection did not scale.

- A feedback loop with detailed tracing and clustering led to a more efficient, self-improving language model stack.

- Because the system learns from its own operations, it cut monthly costs from $420 to under $73 without any additional manual tuning or resource investment.
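For scale, the month-1 and month-4 spend figures from the post imply the following reduction, with C1 = 420 and C4 < 73 denoting monthly spend in USD. Because 73 is only an upper bound on month-4 spend, the true reduction is strictly larger than this figure:

$$
1 - \frac{C_4}{C_1} > 1 - \frac{73}{420} = \frac{347}{420} \approx 0.826
$$

That is, monthly spend fell by more than 82.6% between month 1 and month 4.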

Has anyone else had success building similar self-improving loops into their AI stack?


Originally published at reddit.com. Curated by AI Maestro.
