**Editorial Brief**

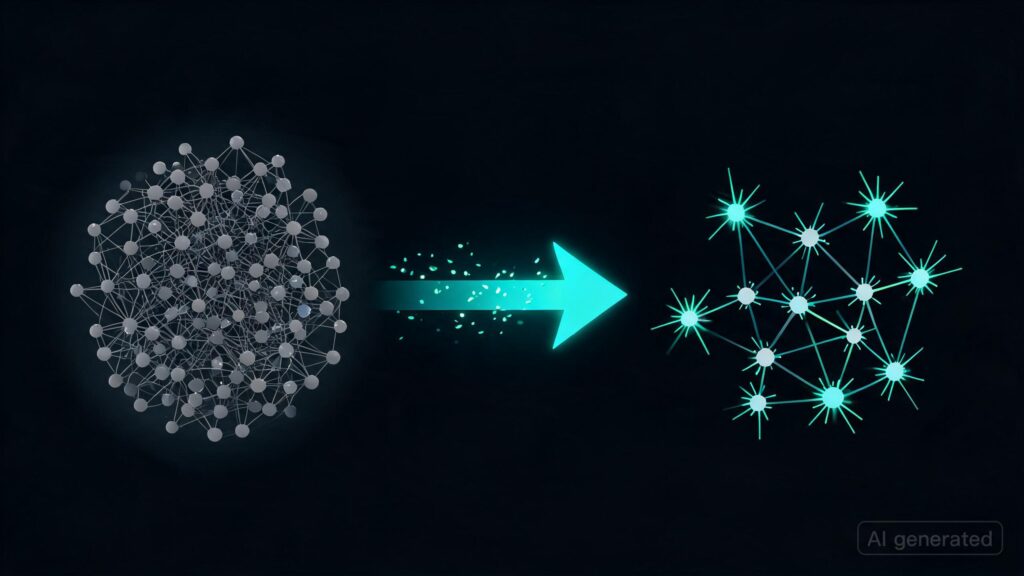

A recent r/LocalLLaMA post titled “converting weights to snn” describes one person’s attempt to compress a large language model, Gemma, into a smaller spiking neural network (SNN). The author proposes converting the 4-billion-parameter Gemma model into a 700-million-parameter SNN while maintaining functionality similar to that of a 2-billion-parameter transformer.

**Takeaways:**

– **Model Compression**: The post reflects ongoing interest in shrinking models without significantly compromising performance, which matters for deployment on resource-limited devices.

– **SNN Architecture**: The author suggests an SNN as a potential alternative architecture for large language models, a departure from the current focus on transformer-based models.

– **Experimental Nature**: The post highlights the experimental and speculative nature of this idea, inviting discussion about feasibility and implications for both research and practical applications.
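
For readers unfamiliar with the idea, here is a minimal sketch of what "converting weights to an SNN" can mean in its simplest form. It is not the post author's method, which this brief does not describe. It assumes the common rate-coding approach: the weights of a ReLU layer are reused unchanged, and each unit becomes an integrate-and-fire neuron whose firing rate approximates the ReLU output. The function names `relu_layer` and `if_layer_rates`, the layer sizes, and the normalization step are all illustrative assumptions.

```python
import numpy as np


def relu_layer(x, W):
    """Reference layer: ordinary ReLU activations."""
    return np.maximum(W @ x, 0.0)


def if_layer_rates(x, W, steps=1000, threshold=1.0):
    """Hypothetical rate-coded counterpart of relu_layer.

    Each output unit is an integrate-and-fire neuron driven by a constant
    input current (W @ x). The returned firing rate approximates the ReLU
    output, provided activations lie in [0, threshold].
    """
    current = W @ x                    # same weights as the ReLU layer
    v = np.zeros_like(current)         # membrane potentials
    spikes = np.zeros_like(current)    # spike counts per neuron
    for _ in range(steps):
        v += current                   # integrate the input
        fired = v >= threshold         # neurons that spike this step
        spikes += fired
        v[fired] -= threshold          # reset by subtraction
    return spikes / steps * threshold  # firing rate, scaled to activation units


# Toy example (assumed sizes): scale the weights so activations stay within
# the range the spiking neurons can represent.
rng = np.random.default_rng(0)
W = rng.normal(size=(4, 8))
x = rng.random(8)
W = W / np.abs(W @ x).max()

print("ReLU outputs:", relu_layer(x, W))
print("Spike rates: ", if_layer_rates(x, W))
```

With more simulation steps the spike rates track the ReLU outputs more closely, which illustrates the basic trade-off in this style of conversion: accuracy is bought with simulation time. Note that this sketch only changes how activations are represented; it does nothing to reduce parameter count, so the compression from 4 billion to 700 million parameters described in the post would require a separate technique.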

Originally published at reddit.com. Curated by AI Maestro.
