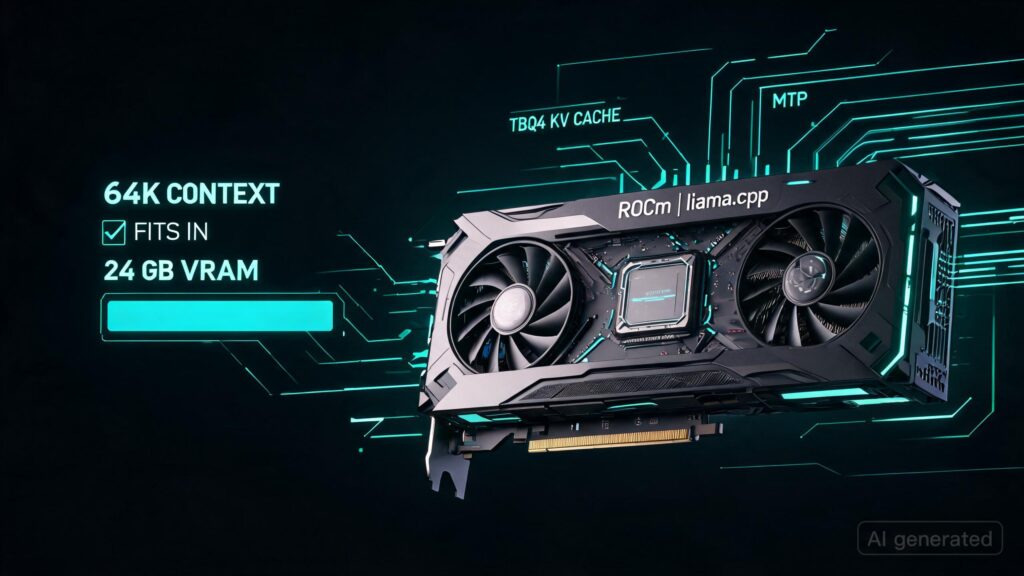

TL;DR: I got TBQ4 KV cache + MTP working on AMD ROCm for RX 7900 XTX / RDNA3 / gfx1100 in llama.cpp. Main win: 64k context fits on 24 GB VRAM and remains usable.

Branch: tbq4-rdna3-experiment (https://github.com/DrBearJew/llama.cpp/tree/tbq4-rdna3-experiment)

I dug into TurboQuant / TBQ4 + MTP on AMD because the existing AMD paths were incomplete or broken for my setup. This branch uses the ROCm VEC Flash Attention path with inline TBQ4 dequant.
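For readers unfamiliar with the term, "inline dequant" roughly means the attention kernel unpacks the quantized K/V values while it accumulates the dot product, instead of first expanding the cache to full precision. The sketch below illustrates that idea in plain Python with a generic symmetric 4-bit block format. It is not TBQ4's actual layout and not the branch's HIP kernel; the block size, the packing order, and the names `quantize_block` and `dot_inline_dequant` are all assumptions made for illustration.

```python
from typing import List, Tuple

BLOCK = 32  # values per block (assumed; chosen to mirror common 32-value blocks)


def quantize_block(x: List[float]) -> Tuple[float, bytes]:
    """Symmetric 4-bit quantization of one block.

    Returns (scale, packed), where packed holds two 4-bit codes per byte.
    Hypothetical format for illustration only, not TBQ4.
    """
    assert len(x) == BLOCK
    amax = max(abs(v) for v in x)
    scale = amax / 7.0 if amax > 0 else 1.0
    # Signed codes in [-8, 7], stored with a +8 offset as 0..15.
    codes = [max(-8, min(7, round(v / scale))) + 8 for v in x]
    packed = bytes(codes[i] | (codes[i + 1] << 4) for i in range(0, BLOCK, 2))
    return scale, packed


def dot_inline_dequant(q: List[float], scale: float, packed: bytes) -> float:
    """Dot product of a float query with a quantized block.

    The 4-bit codes are unpacked inside the accumulation loop, so no
    full-precision copy of the block is ever materialized.
    """
    acc = 0.0
    for i, byte in enumerate(packed):
        lo = (byte & 0x0F) - 8   # even-indexed value
        hi = (byte >> 4) - 8     # odd-indexed value
        acc += q[2 * i] * lo + q[2 * i + 1] * hi
    return acc * scale  # apply the per-block scale once at the end


if __name__ == "__main__":
    k = [(i - 16) / 16 for i in range(BLOCK)]  # toy "key" block
    q = [1.0] * BLOCK                          # toy "query"
    scale, packed = quantize_block(k)
    exact = sum(a * b for a, b in zip(q, k))
    print("exact:", exact, "quantized:", dot_inline_dequant(q, scale, packed))
```

Applying the scale once per block rather than once per value is the usual reason this pattern is cheap: the inner loop works on small integers and the cache stays at roughly 4 bits per value in memory.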

Test setup:

– RX 7900 XTX, 24 GB

– RDNA3 / gfx1100

– ROCm / HIP

– Qwen3.6-27B Q4_K_M MTP GGUF

– tbq4_0 KV cache

– MTP with --spec-draft-n-max 3

Current numbers:

– tbq4_0, 64k ctx: 38–54 tok/s, ~20 GB VRAM

– Prefill: 537.7 tok/s at 16k; 360.8 tok/s in the 64k test

– q8_0 baseline: ~49.8 tok/s at 16k, ~31 tok/s at 32k, ~22–23 GB VRAM
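To see why a 4-bit KV cache leaves room for a longer context than q8_0, here is a back-of-the-envelope size calculation. The model dimensions below are hypothetical placeholders, not Qwen3.6-27B's real configuration, and the result is not meant to reproduce the VRAM figures above. The q8_0 and q4_0 block sizes (34 and 18 bytes per 32 values) are ggml's standard layouts; treating tbq4_0 as costing about the same as q4_0 is an assumption, since its exact layout is not described here.

```python
def kv_cache_bytes(n_ctx: int, n_layers: int, n_kv_heads: int,
                   head_dim: int, bits_per_value: float) -> float:
    """Approximate KV cache size: one K and one V vector per token per layer."""
    values = 2 * n_ctx * n_layers * n_kv_heads * head_dim
    return values * bits_per_value / 8


# Effective bits per value, including the per-block fp16 scale.
Q8_0_BITS = 34 * 8 / 32      # ggml q8_0: 34 bytes per 32 values = 8.5 bits
FOUR_BIT_BITS = 18 * 8 / 32  # ggml q4_0: 18 bytes per 32 values = 4.5 bits
                             # (assumed as a stand-in for tbq4_0)

# Hypothetical model dimensions, for illustration only.
N_LAYERS, N_KV_HEADS, HEAD_DIM = 64, 8, 128

if __name__ == "__main__":
    gib = 1024 ** 3
    for n_ctx in (16_384, 32_768, 65_536):
        q8 = kv_cache_bytes(n_ctx, N_LAYERS, N_KV_HEADS, HEAD_DIM, Q8_0_BITS)
        q4 = kv_cache_bytes(n_ctx, N_LAYERS, N_KV_HEADS, HEAD_DIM, FOUR_BIT_BITS)
        print(f"ctx={n_ctx:>6}: q8_0 ~ {q8 / gib:.2f} GiB, 4-bit ~ {q4 / gib:.2f} GiB")
```

Whatever the real dimensions are, the cache grows linearly with context length, so roughly halving the bits per value roughly doubles the context that fits in the same memory budget.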

Caveats:

– RX 7900 XTX is RDNA3 / gfx1100, not RDNA3.5.

– RDNA3.5 / RDNA4 are enabled but untested.

– RotorQuant / PlanarQuant / IsoQuant are present but not validated.

– These are reported points from separate runs, not a clean scaling curve.

Happy to have new testers.

Useful bug reports > hype.

submitted by /u/DrBearJ3w


Originally published at reddit.com. Curated by AI Maestro.
