
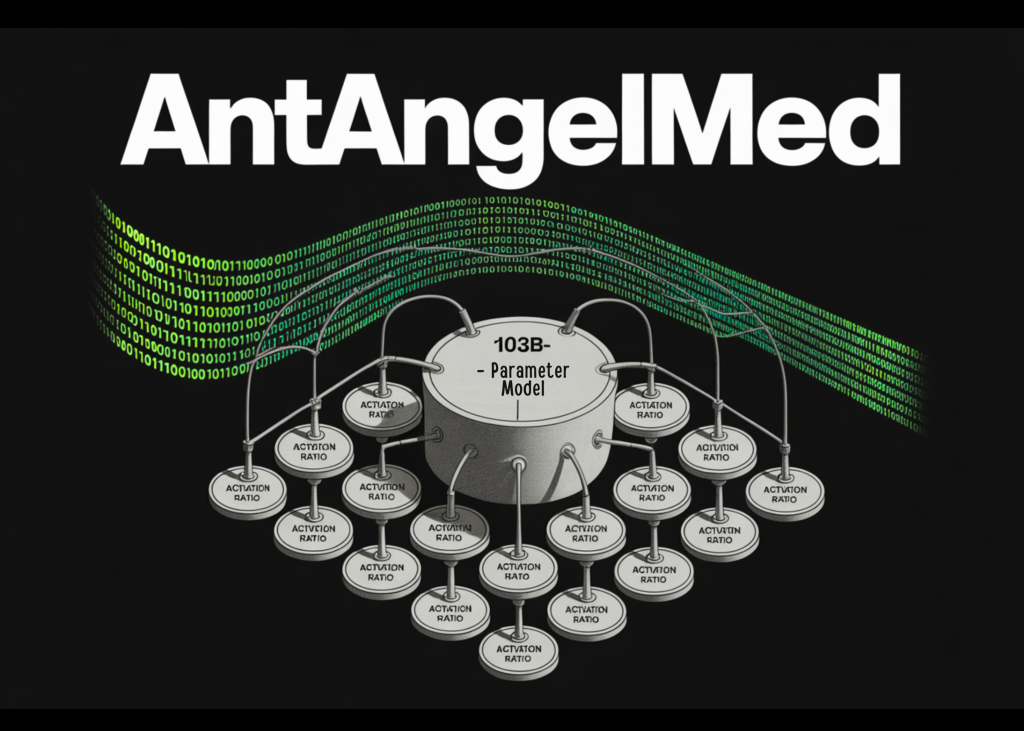

Meet AntAngelMed: A 103B-Parameter Open-Source Medical Language Model

What Is AntAngelMed?

AntAngelMed is a large-scale medical-domain language model with 103 billion total parameters, of which only 6.1 billion are activated per token during inference. It achieves this through a Mixture-of-Experts (MoE) architecture with a 1/32 activation ratio, which keeps computation efficient while preserving a large knowledge capacity.
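The parameter figures above can be checked with a few lines of arithmetic. The sketch below is illustrative only: the expert count of 256 is a hypothetical value chosen for the example, since the article does not state how many experts the model has. Note that 6.1B out of 103B is a larger share than 1/32, which is consistent with one plausible reading: the 1/32 ratio describes how many experts are routed per token, while components such as attention and embeddings are presumably always active.

```python
# Figures quoted in the article.
TOTAL_PARAMS = 103e9        # total parameters
ACTIVE_PARAMS = 6.1e9       # parameters activated during inference
ACTIVATION_RATIO = 1 / 32   # stated MoE activation ratio


def active_fraction(total: float, active: float) -> float:
    """Share of all parameters that take part in a forward pass."""
    return active / total


def experts_per_token(num_experts: int, ratio: float) -> int:
    """Experts routed per token for a given activation ratio.

    num_experts is a hypothetical input; the article does not give it.
    """
    return round(num_experts * ratio)


frac = active_fraction(TOTAL_PARAMS, ACTIVE_PARAMS)
print(f"active share of parameters: {frac:.1%}")
print(f"stated activation ratio:    {ACTIVATION_RATIO:.1%}")
# 256 experts is an assumption made purely for illustration.
print(f"experts per token (assuming 256 experts): {experts_per_token(256, ACTIVATION_RATIO)}")
```

Under these assumptions, the active share of parameters comes out near 6%, roughly double the stated 1/32 ratio.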

Training Pipeline

The model undergoes a three-stage training process:

- Stage 01: Continual Pre-Training – The model is pre-trained on large-scale medical corpora including encyclopedias, web text, and academic publications. This phase builds the foundational general reasoning capability.

- Stage 02: Supervised Fine-Tuning (SFT) – The model is fine-tuned on a multi-source instruction dataset that covers both general tasks, such as math and programming, and medical scenarios, such as doctor–patient Q&A, diagnostic reasoning, and safety/ethics cases.

- Stage 03: Reinforcement Learning via GRPO – The model is further refined with the Group Relative Policy Optimization (GRPO) algorithm. This stage uses task-specific reward models to encourage responses that are empathetic, structurally clear, safe, and evidence-based.
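The core idea behind GRPO in Stage 03 is that each sampled response is scored relative to the other responses drawn for the same prompt, rather than against a learned value function. The following is a minimal sketch of that group-relative normalization, not AntAngelMed's actual training code. The reward dimensions mirror the four qualities named above, but the weights, the scores, and the `combined_reward` helper are hypothetical, and the use of the population standard deviation is an assumption.

```python
from statistics import mean, pstdev


def combined_reward(scores: dict[str, float], weights: dict[str, float]) -> float:
    """Weighted sum of per-dimension reward-model scores (hypothetical scheme)."""
    return sum(weights[name] * scores[name] for name in weights)


def group_relative_advantages(rewards: list[float], eps: float = 1e-8) -> list[float]:
    """Normalize rewards within one group of responses to the same prompt.

    Each advantage is (reward - group mean) / (group std + eps).
    """
    mu = mean(rewards)
    sigma = pstdev(rewards)  # population std; an assumption of this sketch
    return [(r - mu) / (sigma + eps) for r in rewards]


# Hypothetical weights and scores, for illustration only.
weights = {"empathy": 0.25, "structure": 0.25, "safety": 0.25, "evidence": 0.25}
group_scores = [
    {"empathy": 0.9, "structure": 0.8, "safety": 1.0, "evidence": 0.7},
    {"empathy": 0.4, "structure": 0.6, "safety": 1.0, "evidence": 0.5},
    {"empathy": 0.7, "structure": 0.9, "safety": 0.2, "evidence": 0.8},
    {"empathy": 0.6, "structure": 0.5, "safety": 0.9, "evidence": 0.9},
]

rewards = [combined_reward(s, weights) for s in group_scores]
advantages = group_relative_advantages(rewards)
for r, a in zip(rewards, advantages):
    print(f"reward={r:.3f}  advantage={a:+.3f}")
```

Responses that score above the group mean receive a positive advantage and are reinforced; those below the mean receive a negative one. In a full GRPO implementation these advantages would feed a clipped policy-gradient objective with a KL penalty, which this sketch omits.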

Inference Performance

On NVIDIA H20 hardware, AntAngelMed achieves a throughput of over 200 tokens per second, roughly three times that of a comparable 36-billion-parameter dense model. Combining EAGLE3 speculative decoding with FP8 quantization raises inference throughput further, with the following reported gains by benchmark:

- HumanEval: +71%

- GSM8K: +45%

- Math-500: +94%
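To make the percentage gains concrete, the sketch below converts a relative gain into an absolute throughput and a generation time. The 200 tokens-per-second baseline is used purely as an illustrative input: the article does not say that the benchmark gains were measured against that figure, so the printed values are hypothetical rather than reported results.

```python
# Relative throughput gains reported in the article.
REPORTED_GAINS = {"HumanEval": 0.71, "GSM8K": 0.45, "Math-500": 0.94}


def accelerated_throughput(baseline_tps: float, gain: float) -> float:
    """Throughput after applying a relative gain (0.71 means +71%)."""
    return baseline_tps * (1.0 + gain)


def generation_seconds(num_tokens: int, tps: float) -> float:
    """Time to generate num_tokens at a steady tokens-per-second rate."""
    return num_tokens / tps


# Hypothetical baseline; not stated as the benchmark baseline in the article.
baseline_tps = 200.0

for name, gain in REPORTED_GAINS.items():
    tps = accelerated_throughput(baseline_tps, gain)
    secs = generation_seconds(1000, tps)
    print(f"{name}: {tps:.0f} tok/s, 1000 tokens in {secs:.2f} s")
```

A +94% gain nearly halves the generation time for a fixed output length, while +45% cuts it by about a third.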

The model supports a context length of 128K tokens, enough to handle full clinical documents and extended patient histories.

Benchmark Results

- HealthBench: AntAngelMed ranks first among all open-source models and outperforms top proprietary models, particularly on the HealthBench-Hard subset.

- MedAIBench: AntAngelMed ranks in the top tier, with strong performance in the medical knowledge Q&A and medical ethics/safety categories.

- MedBench: AntAngelMed ranks first overall, demonstrating comprehensive capability across a range of healthcare language tasks.

MarkTechPost’s Visual Explainer