
How to Build a Cost-Aware LLM Routing System with NadirClaw Using Local Prompt Classification and Gemini Model Switching

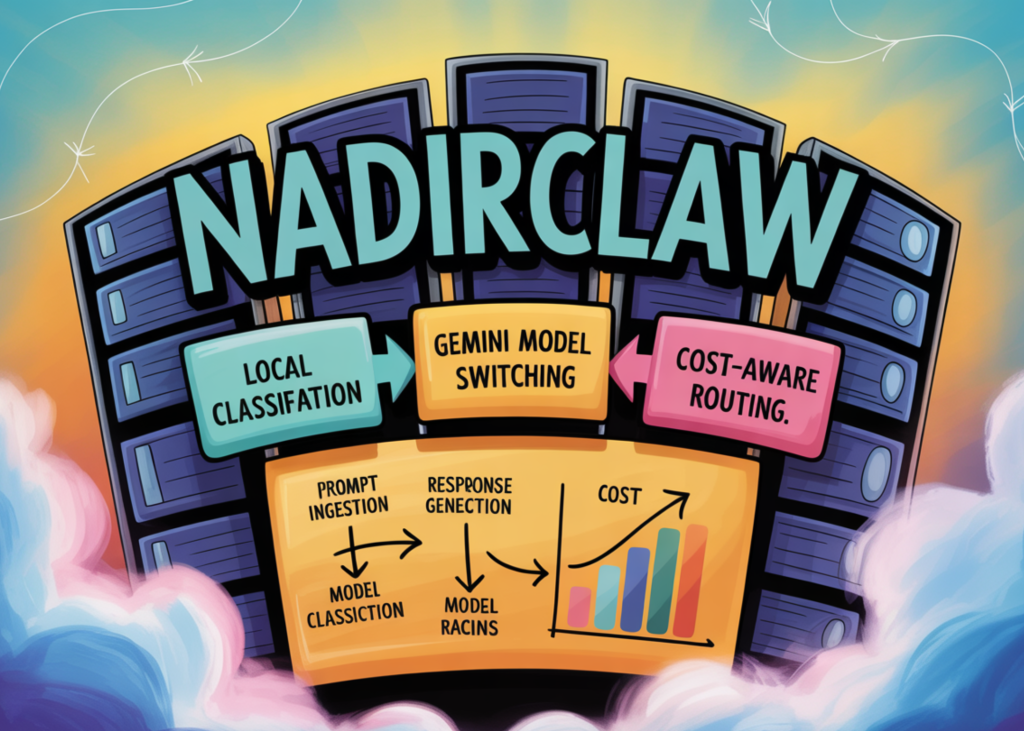

In this tutorial, we explore NadirClaw as an intelligent routing layer that classifies prompts into simple and complex tiers before sending them to the most suitable model. We start by installing the required packages, setting up an optional Gemini API key, and testing the local classifier through the NadirClaw CLI without making any live LLM calls. We then inspect the centroid vectors that power the routing decision, embed our own prompts, visualize how similarity scores separate simple and complex tasks, and experiment with confidence thresholds. After understanding the local routing logic, we move into live routing by launching the NadirClaw proxy server, sending OpenAI-compatible requests through it, comparing routed model behavior, and estimating cost savings against an always-Pro baseline.

We install NadirClaw and the supporting Python libraries required for routing, embeddings, plotting, API calls, and data handling. We then import all required modules and securely capture the Gemini API key through the environment or a hidden prompt. We also decide whether live routing sections should run, while still allowing the local classifier sections to work without an API key.
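
The key-capture step described above can be sketched as follows. This is a minimal illustration, not the tutorial's actual code: the environment variable name `GEMINI_API_KEY` and the helper `resolve_api_key` are assumptions introduced here, and the prompt function is injectable only so the logic can be exercised without a terminal.

```python
import os
from getpass import getpass
from typing import Callable, Optional


def resolve_api_key(
    env_var: str = "GEMINI_API_KEY",  # assumed variable name, not confirmed by the text
    prompt_fn: Optional[Callable[[str], str]] = getpass,
) -> str:
    """Return the key from the environment, else ask for it with a hidden prompt.

    An empty string means "no key": live routing sections should be skipped,
    while the local classifier sections can still run.
    """
    key = os.environ.get(env_var, "").strip()
    if not key and prompt_fn is not None:
        key = prompt_fn(f"{env_var} (leave blank to skip live sections): ").strip()
    if key:
        # Export the cleaned key so child processes can read it too.
        os.environ[env_var] = key
    return key
```

In a notebook, one might then write `api_key = resolve_api_key()` followed by `RUN_LIVE = bool(api_key)` and guard every live section with that flag.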

We define a reusable classify() function that sends prompts to the NadirClaw CLI and returns structured JSON results. We create a mixed set of simple and complex prompts, classify them, and display the routing tier, score, confidence, model, and prompt text in a table. We then load the simple and complex centroid vectors from the NadirClaw package and compare their shapes, norms, and cosine similarity.
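
A reusable `classify()` wrapper of the kind described above might look like the sketch below. The text does not give the exact NadirClaw subcommand or flags, so the `ASSUMED_CLI` tuple is a placeholder that should be checked against the CLI's own help output; the wrapper only assumes that the command prints a single JSON document on stdout.

```python
import json
import subprocess
from typing import Sequence

# ASSUMPTION: subcommand and flags are illustrative, not confirmed by the text.
ASSUMED_CLI = ("nadirclaw", "classify", "--format", "json")


def classify(prompt: str, cli: Sequence[str] = ASSUMED_CLI, timeout: float = 120.0) -> dict:
    """Run a classifier CLI on one prompt and parse its stdout as JSON."""
    proc = subprocess.run(
        [*cli, prompt],          # the prompt is passed as one argument, no shell involved
        capture_output=True,
        text=True,
        timeout=timeout,
    )
    if proc.returncode != 0:
        raise RuntimeError(
            f"classifier exited with {proc.returncode}: {proc.stderr.strip()}"
        )
    return json.loads(proc.stdout)
```

Passing the command as a parameter keeps the wrapper usable even if the real flags differ, and it lets the parsing logic be tested against a stand-in command.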

We use the same SentenceTransformer encoder as NadirClaw and embed all tutorial prompts locally. We compare each prompt embedding against the simple and complex centroids, then visualize the routing boundary with a scatter plot. We also sort prompts by complexity score, test confidence thresholds, and inspect routing modifier examples for agentic, reasoning, and vision-style requests.
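
To make the centroid comparison concrete, here is a small pure-Python sketch with toy 3-dimensional vectors. It is not NadirClaw's implementation: the margin definition, the fallback of low-confidence prompts to the complex tier, and the threshold value `0.05` are all assumptions chosen for illustration.

```python
import math
from typing import Sequence


def cosine(a: Sequence[float], b: Sequence[float]) -> float:
    """Cosine similarity between two equal-length, non-zero vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)


def route(
    embedding: Sequence[float],
    simple_centroid: Sequence[float],
    complex_centroid: Sequence[float],
    threshold: float = 0.05,  # hypothetical confidence threshold
) -> dict:
    """Pick a tier from the similarity margin between the two centroids."""
    sim_simple = cosine(embedding, simple_centroid)
    sim_complex = cosine(embedding, complex_centroid)
    margin = sim_complex - sim_simple      # positive means closer to "complex"
    confidence = abs(margin)
    # Assumed policy: when the margin is too small to trust, prefer the
    # stronger model rather than risk a poor answer from the cheaper one.
    if margin > 0 or confidence < threshold:
        tier = "complex"
    else:
        tier = "simple"
    return {"tier": tier, "margin": margin, "confidence": confidence}
```

Raising the threshold sends more borderline prompts to the complex tier, which trades cost for answer quality; lowering it does the opposite.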

If a Gemini API key is provided, we start the NadirClaw proxy server on port 8856 in live mode. The proxy routes each request to either the simple-tier or the complex-tier model, depending on the classification result. We then compare how the routed models behave and estimate cost savings against an always-Pro baseline.
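
An OpenAI-compatible request to the local proxy could be built as in the sketch below, using only the standard library. Port 8856 comes from the tutorial; the `/v1/chat/completions` path follows the usual OpenAI convention and is assumed here, as are the placeholder model name `"auto"` and the dummy bearer token.

```python
import json
import urllib.request

# Port from the tutorial; the /v1 prefix is an assumption based on OpenAI's API layout.
PROXY_BASE = "http://localhost:8856/v1"


def build_chat_request(
    prompt: str,
    model: str = "auto",        # hypothetical name asking the proxy to choose a model
    base_url: str = PROXY_BASE,
    api_key: str = "local",     # dummy token; the proxy holds the real Gemini key
) -> urllib.request.Request:
    """Build a chat-completions POST request without sending it."""
    payload = {
        "model": model,
        "messages": [{"role": "user", "content": prompt}],
    }
    return urllib.request.Request(
        url=f"{base_url}/chat/completions",
        data=json.dumps(payload).encode("utf-8"),
        headers={
            "Content-Type": "application/json",
            "Authorization": f"Bearer {api_key}",
        },
        method="POST",
    )


def send(request: urllib.request.Request, timeout: float = 60.0) -> dict:
    """Send the request and decode the JSON response body."""
    with urllib.request.urlopen(request, timeout=timeout) as resp:
        return json.loads(resp.read().decode("utf-8"))
```

With the proxy running, `send(build_chat_request("What is 2+2?"))` would return the parsed response. Comparing the `model` field of responses across prompts is one way to see which tier handled each request, assuming the proxy reports it there.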

Key Takeaways

- NadirClaw acts as an intelligent routing layer for LLMs, combining local prompt classification with switching between Gemini models.

- Prompts are classified as simple or complex by comparing their embeddings with centroid vectors.

- A live proxy server routes OpenAI-compatible requests, which lets us compare how the routed models behave.

- Cost savings are estimated by comparing the cost of the routed requests with an always-Pro baseline.
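
The cost comparison against an always-Pro baseline reduces to simple arithmetic, sketched below. The per-token prices are placeholders and not actual Gemini pricing; the `Call` record and the tier names are likewise hypothetical and would need to be replaced with real usage data and current rates.

```python
from dataclasses import dataclass
from typing import Iterable


@dataclass(frozen=True)
class Call:
    tier: str           # "simple" or "complex", as chosen by the router
    input_tokens: int
    output_tokens: int


# PLACEHOLDER prices in USD per 1M tokens as (input, output); not real pricing.
PRICES = {"simple": (0.10, 0.40), "complex": (1.25, 10.00)}


def call_cost(call: Call, tier: str) -> float:
    """Cost of one call if it were served by the given tier."""
    price_in, price_out = PRICES[tier]
    return (call.input_tokens * price_in + call.output_tokens * price_out) / 1_000_000


def estimate_savings(calls: Iterable[Call]) -> dict:
    """Compare routed cost with a baseline that sends everything to 'complex'."""
    calls = list(calls)
    routed = sum(call_cost(c, c.tier) for c in calls)
    baseline = sum(call_cost(c, "complex") for c in calls)
    saved = baseline - routed
    percent = 100.0 * saved / baseline if baseline else 0.0
    return {"routed": routed, "baseline": baseline, "saved": saved, "percent": percent}
```

Savings grow with the share of traffic routed to the simple tier, so the estimate is only as good as the prompt mix used to produce it.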



Originally published at marktechpost.com. Curated by AI Maestro.
