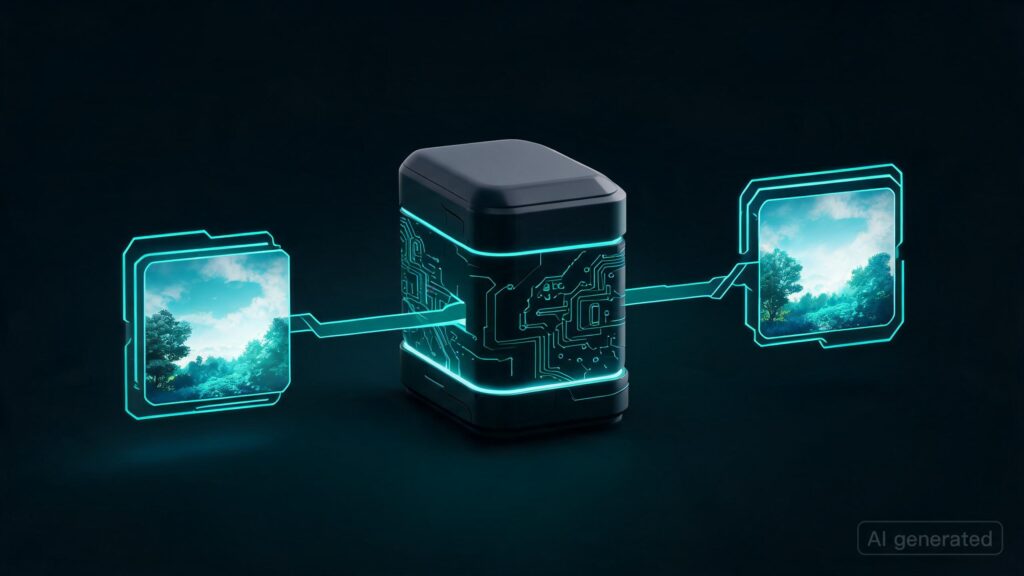

**What Happened:** The SenseNova team has released **SenseNova-U1**, a new series of multimodal models. The series unifies visual understanding and generation within a single monolithic architecture. This is a significant shift from previous approaches that relied on adapters to connect modalities. The release includes two sizes, 8B MoT and A3B MoT, with weights available on Hugging Face.

**Why It Matters:** A unified multimodal model can reason and act across language and vision without handing work off between separate components. Because no adapter mechanisms are involved, a single network handles understanding, generation, and interleaved image-text reasoning. The team describes it as both efficient and strong at all three. The two model sizes, offered with various fine-tuning options, also give flexibility across a wide range of applications.

– **Unified Multimodal Understanding:** SenseNova-U1 processes language and vision in the same model rather than bridging separate components. The goal is more coherent and effective AI systems.

– **Efficient Reasoning:** Because all modalities share one architecture, the model is intended to reason across text and image inputs more efficiently than adapter-based designs.

– **Versatile Model Sizes:** The two sizes let users pick the balance between performance and resource usage that suits their application.

Originally published at reddit.com. Curated by AI Maestro.
