
It’s Not All Training Anymore

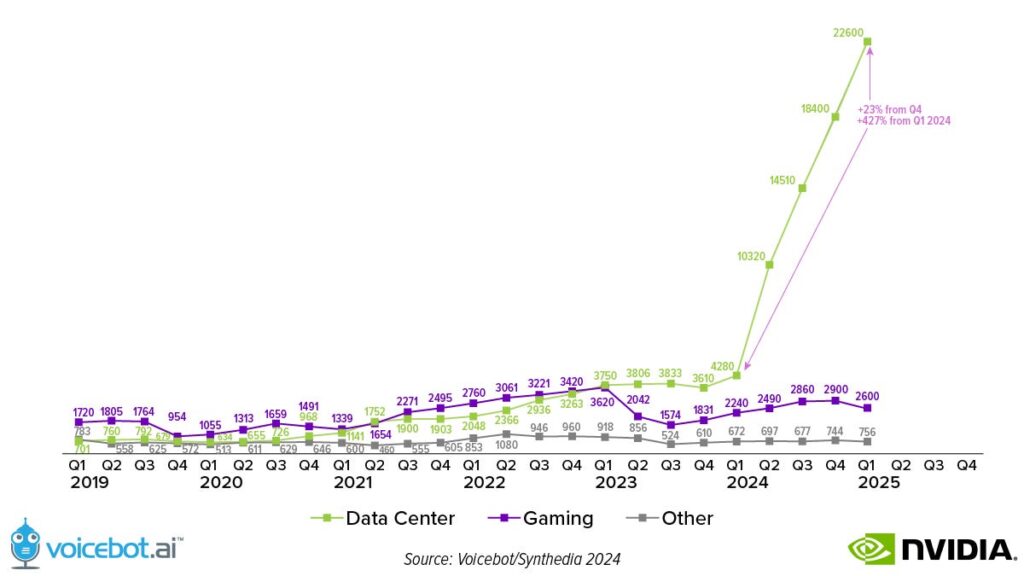

The company estimates that inference drove about 40% of its data center revenue. That demand grew even though a 3x improvement in inference speed translated into a roughly comparable reduction in serving costs. Inference is scaling across applications, model complexity, users, and queries per user. The same figure also means that about three-fifths of data center revenue is still driven by training AI models.
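A simplified model shows why that growth is notable. It assumes, as a rough illustration and not something NVIDIA reports, that inference GPU spend $S$ equals queries served $Q$ divided by queries each GPU handles per period $T$, times a fixed price per GPU $P$. If throughput per GPU triples ($T' = 3T$), spending on the new volume $Q'$ exceeds the old spending only when query volume more than triples:

$$
S = \frac{Q}{T}\,P,
\qquad
S' = \frac{Q'}{3T}\,P > \frac{Q}{T}\,P = S
\iff Q' > 3Q
$$

Under these assumptions, rising inference revenue after a 3x speedup implies that query volume grew more than threefold.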

In Q1, NVIDIA worked with more than 100 companies to build AI factories, some of them exceeding 100,000 GPUs. NVIDIA expects automotive to be its largest enterprise vertical within data center this year, due largely to growing training demand for self-driving and other vision systems. Tesla expanded its training cluster to 35,000 H100 GPUs during the quarter.

Rising Developer Adoption

NVIDIA’s strength extends beyond the hardware to developer adoption of its CUDA software. The number of CUDA developers more than doubled over the past three years, rising from 2.5 million to 5.1 million. The numbers of startups and GPU-based applications also more than doubled over the same period.
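To put that pace in annual terms, growing from 2.5 million to 5.1 million developers over three years implies a compound annual growth rate of roughly 27%. The minimal Python sketch below shows the calculation. The `cagr` helper is a hypothetical name used only for illustration, and it assumes the two counts are exactly three years apart.

```python
def cagr(start: float, end: float, years: float) -> float:
    """Compound annual growth rate implied by moving from start to end over `years` years.

    Hypothetical helper for illustration; assumes steady compounding.
    """
    if start <= 0 or years <= 0:
        raise ValueError("start and years must be positive")
    return (end / start) ** (1 / years) - 1


# CUDA developer counts from the text, in millions, assumed to be three years apart.
rate = cagr(2.5, 5.1, 3)
print(f"Implied annual growth: {rate:.1%}")  # prints: Implied annual growth: 26.8%
```

Compounding at that rate for three years reproduces the 5.1 million figure, which is a quick way to sanity-check the result.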

The Engine Behind Model Advances

It is hard to overstate NVIDIA’s importance to the generative AI innovation curve. Accelerated computing and the relentless drive to reduce costs have enabled new and larger models to reach the market faster. That opens up new use cases for businesses and consumers and drives both trial and adoption of the technology.

Because GPUs are an essential input to generative AI model training, you can treat NVIDIA sales as a leading indicator of industry growth. That will remain true until capacity exceeds demand. When NVIDIA sales begin to decline, it will be a sign that the capacity shortage is ending and that AI model training and inference costs are likely to start falling.
