“`html

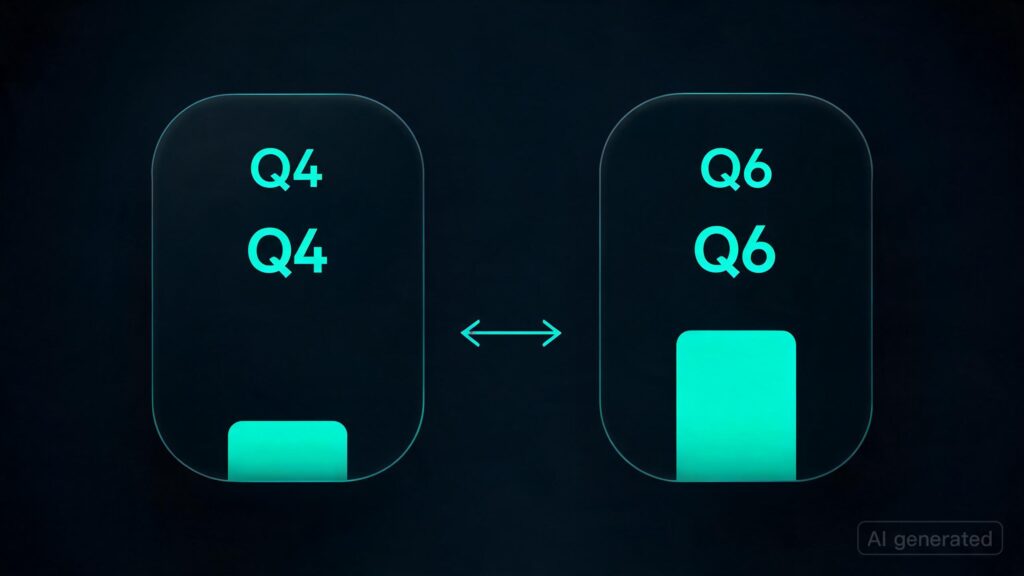

A user on Reddit asked about the performance difference between running Qwen 3.6 at different quantization levels (Q4 vs Q6).

The main point of discussion is whether there’s a noticeable improvement in performance or stability when using higher quantization levels, such as Q6, compared to the default Q4 setting.

- For those running Qwen 3.6 at Q4, an additional GPU might be beneficial for handling larger context windows and token generation rates.

- The user notes a noticeable difference in performance when switching from Q4 to Q6, especially with the addition of another high-performance GPU like a 3090.

- While some users report better stability at higher quantization levels, others find no significant difference and continue using Q4 for their needs.

- The Reddit post highlights the need for more empirical data to determine if performance improvements are worth the additional computational load.

- Users suggest testing different configurations in controlled environments before committing to a higher quantization level for production use cases.

- The discussion underscores the importance of understanding one’s specific use case and system limitations when deciding on GPU settings.

“`
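The memory side of the Q4 versus Q6 trade-off can be roughed out with simple arithmetic. The sketch below is illustrative only and rests on several assumptions that are not from the thread: "Q4" and "Q6" are read as llama.cpp-style `Q4_K` and `Q6_K` blocks (nominally 4.5 and 6.5625 bits per weight), the 30-billion parameter count is a hypothetical placeholder rather than the actual size of Qwen 3.6, and `reserve_gb` is a made-up per-GPU allowance for KV cache and runtime overhead. The 24 GB figure is the VRAM of a single RTX 3090.

```python
import math

# Nominal bits per weight. ASSUMPTION: the thread's "Q4" and "Q6" are taken to
# mean llama.cpp-style Q4_K and Q6_K blocks. Real GGUF files mix tensor types,
# so actual file sizes will differ somewhat.
BITS_PER_WEIGHT = {"Q4": 4.5, "Q6": 6.5625}

GPU_VRAM_GB = 24.0  # VRAM of one RTX 3090


def weight_size_gb(params_billion: float, quant: str) -> float:
    """Approximate size of the quantized weights in GB (10^9 bytes)."""
    total_bits = params_billion * 1e9 * BITS_PER_WEIGHT[quant]
    return total_bits / 8 / 1e9


def gpus_needed(params_billion: float, quant: str, reserve_gb: float = 4.0) -> int:
    """GPUs required to hold the weights alone.

    reserve_gb is a HYPOTHETICAL per-GPU allowance for KV cache, activations,
    and runtime overhead; tune it for your context length.
    """
    usable_gb = GPU_VRAM_GB - reserve_gb
    return math.ceil(weight_size_gb(params_billion, quant) / usable_gb)


if __name__ == "__main__":
    params_billion = 30.0  # HYPOTHETICAL model size, not taken from the thread
    for quant in BITS_PER_WEIGHT:
        size = weight_size_gb(params_billion, quant)
        count = gpus_needed(params_billion, quant)
        print(f"{quant}: ~{size:.1f} GB of weights, {count} x 24 GB GPU(s)")
```

Under these assumptions, the step from Q4 to Q6 adds roughly 46% to the weight footprint, which is one plausible reason a second 24 GB card comes up in the discussion. It says nothing about output quality or stability, which still need to be measured on your own workload.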

Originally published at reddit.com. Curated by AI Maestro.
