“`html

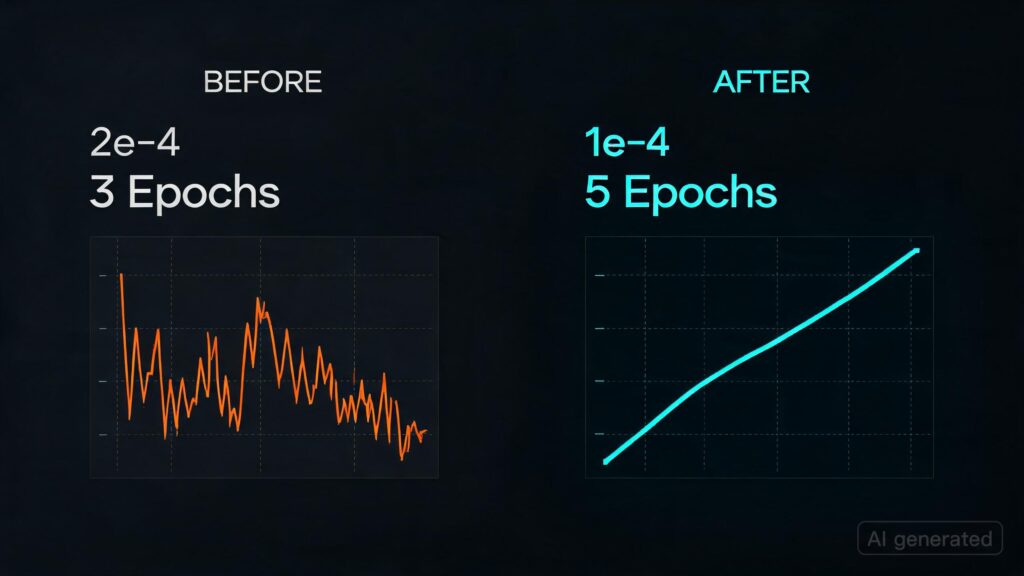

- A Reddit user reported that reducing the learning rate from 2e-4 to 1e-4 and increasing the number of training epochs from 3 to 5 improved their Qlora fine-tuning results significantly. They were previously struggling with poor evaluation metrics despite trying various data preprocessing techniques.

- Specifically, they noted that a learning rate of 2e-4 was too high for their dataset size (8k samples), leading the model to overfit in just one epoch and performing poorly thereafter. By lowering this to 1e-4, they allowed the model more time to converge without overshooting the optimal solution.

“`
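To make the change concrete, here is a minimal sketch of the two settings side by side, along with the number of optimizer steps each one implies. The learning rates, epoch counts, and the 8k dataset size come from the post; the `FineTuneConfig` class and the effective batch size of 16 are assumptions made for illustration, since the original poster did not share their batch size or training script.

```python
import math
from dataclasses import dataclass


@dataclass(frozen=True)
class FineTuneConfig:
    """Hypothetical container for the hyperparameters discussed in the post."""

    learning_rate: float
    num_train_epochs: int
    num_samples: int = 8_000        # dataset size reported in the post
    effective_batch_size: int = 16  # assumption: not stated in the post

    def steps_per_epoch(self) -> int:
        # One optimizer step per batch; a final partial batch still counts.
        return math.ceil(self.num_samples / self.effective_batch_size)

    def total_steps(self) -> int:
        return self.steps_per_epoch() * self.num_train_epochs


# Settings before and after the change described above.
before = FineTuneConfig(learning_rate=2e-4, num_train_epochs=3)
after = FineTuneConfig(learning_rate=1e-4, num_train_epochs=5)

for name, cfg in (("before", before), ("after", after)):
    print(
        f"{name}: lr={cfg.learning_rate:g}, "
        f"epochs={cfg.num_train_epochs}, "
        f"total_steps={cfg.total_steps()}"
    )
```

Under the assumed batch size, the new settings take more, smaller update steps. If you train with Hugging Face `transformers`, the two values correspond to the `learning_rate` and `num_train_epochs` arguments of `TrainingArguments`; whether the poster used that library is not stated.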

### Takeaways

- Lowering the learning rate from 2e-4 to 1e-4 can significantly improve QLoRA fine-tuning results on smaller datasets (about 8k samples in this report).

- Increasing the number of training epochs from 3 to 5 helped stabilize and improve the model’s performance.

- Careful tuning of hyperparameters such as the learning rate is crucial, especially with limited data.

Originally published at reddit.com. Curated by AI Maestro.
