Developing an Open-Source LLM from the Ground Up

I have been working on creating a large language model (LLM) from scratch. It is based on the DeepSeek architecture with optimizations aimed at reducing the VRAM footprint.

Current Pre-training Setup

- Hardware: 2 x NVIDIA RTX PRO 6000 GPUs, each rated at 600 W

- The model currently in pre-training has 7B parameters. It keeps a single GPU at 100% utilization and uses approximately 80 GB of VRAM.

- Reducing the expert count will substantially reduce the VRAM footprint, allowing for more experimentation and optimization.
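To make the link between expert count and memory concrete, here is a minimal sketch that estimates the routed-expert weights from values in the configuration listed later in this post. It assumes each expert is a SwiGLU-style feed-forward block with three weight matrices and no biases, and that `first_k_dense_replace` means the first 8 layers carry no experts; neither assumption is confirmed by the post. Gradients, optimizer states, and activations are not counted.

```python
# Rough estimate of how the routed-expert count drives weight memory.
# Assumption (not confirmed by the post): each expert is a SwiGLU-style FFN
# with three weight matrices (gate, up, down) and no biases.

D_MODEL = 1408          # "d_model" from the config
EXPERT_HIDDEN = 1536    # "expert_intermediate_size" from the config
NUM_LAYERS = 24         # "num_layers" from the config
FIRST_K_DENSE = 8       # "first_k_dense_replace": assumed to mean 8 dense layers
BYTES_PER_BF16 = 2


def routed_expert_params(num_experts: int) -> int:
    """Parameters held by routed experts across all MoE layers."""
    per_expert = 3 * D_MODEL * EXPERT_HIDDEN
    moe_layers = NUM_LAYERS - FIRST_K_DENSE
    return per_expert * num_experts * moe_layers


for n in (64, 32, 16):
    params = routed_expert_params(n)
    gib = params * BYTES_PER_BF16 / 2**30
    print(f"{n:>2} experts: {params / 1e9:.2f}B params, {gib:.1f} GiB of bf16 weights")
```

Under these assumptions the routed experts account for roughly 6.6B of the 7B parameters, so halving the expert count would roughly halve the weight memory.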

Main Goal: My objective is to enable open-source development that can outpace proprietary models. I envision a large database of trained models available for anyone to use in the future. Additionally, there could be a feature where users can rent models from an open-source developer as a support service.

Data Splits and Training Strategy

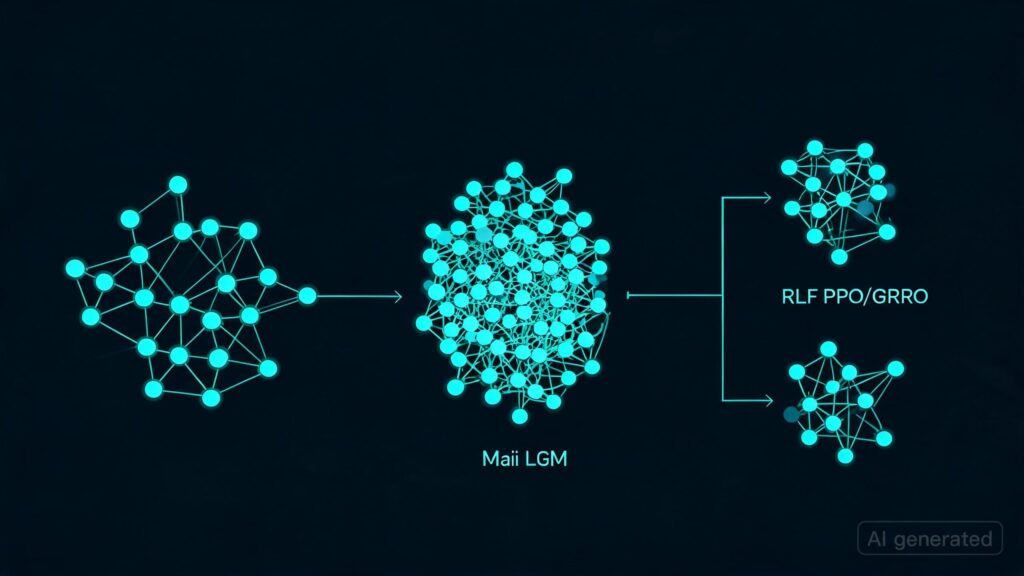

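- I am using data splits from Dolma (the open pre-training corpus released by the Allen Institute for AI) and training a separate model on each split for a specific domain such as math, literature, or physics. These individual models will then be deployed as agents in an ensemble system.

- The token budget follows the Chinchilla compute-optimal scaling guidance, which concerns how many tokens to train on rather than the architecture itself. The configuration targets 40 tokens per parameter (280B tokens for 7B parameters), roughly double the ~20 tokens per parameter usually cited from the Chinchilla results. Thank you to DeepMind for providing this guidance!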
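The token budget arithmetic can be checked in a few lines. This sketch uses the `tokens_per_parameter_ratio` and `total_training_tokens` values from the configuration in this post; the 20 tokens-per-parameter figure is the commonly quoted Chinchilla rule of thumb and is included only for comparison.

```python
def token_budget(num_params: float, tokens_per_param: float) -> float:
    """Training tokens implied by a tokens-per-parameter ratio."""
    return num_params * tokens_per_param


PARAMS = 7e9  # 7B parameters, as stated in the post

configured = token_budget(PARAMS, 40.0)     # ratio used in the config
rule_of_thumb = token_budget(PARAMS, 20.0)  # assumption: ~20 tokens/param heuristic

print(f"configured budget:    {configured / 1e9:.0f}B tokens")
print(f"rule-of-thumb budget: {rule_of_thumb / 1e9:.0f}B tokens")
```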

Technical Details:

- Training all layers and operations in bfloat16, with options to switch to fp16 or fp32 if available.

- I have encountered many setbacks during the development process but managed to get something working!
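For readers unfamiliar with the format: bfloat16 keeps the sign bit, the full 8-bit exponent, and the top 7 mantissa bits of a float32, so it covers the same range as fp32 at much lower precision. The following is a minimal standard-library sketch of that rounding, not the training code from this project; it does not handle NaN, infinity, or overflow.

```python
import struct


def to_bfloat16(x: float) -> float:
    """Round x to the nearest bfloat16 value (ties to even), as a Python float."""
    bits = struct.unpack("<I", struct.pack("<f", x))[0]
    # bfloat16 is the upper 16 bits of the float32 bit pattern.
    rounding_bias = 0x7FFF + ((bits >> 16) & 1)
    bits = (bits + rounding_bias) & 0xFFFF0000
    return struct.unpack("<f", struct.pack("<I", bits))[0]


print(to_bfloat16(3.14159))  # only about 3 significant decimal digits survive
print(to_bfloat16(70000.0))  # above the fp16 maximum (65504), still finite in bfloat16
```

This is the usual reason to prefer bfloat16 over fp16 for training: values above the fp16 maximum stay finite, at the cost of coarser precision.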

Progress Report:

This is 15000 steps in…

[FACTUAL ACCURACY TEST] Step 14000 Prompt: "The capital of France is" Output: "the city of Nice. France may also refer to: France (surname) France (surname) France (or Republic of France)" [EXPECTED: Paris][CORRECT]

[FACTUAL ACCURACY TEST] Step 14000 Prompt: "The capital of Japan is" Output: "the capital of the autonomous prefecture of Hokkaido. Etymology The name of Hokkaido is derived from Ainu words for 'elevated land'" [EXPECTED: Tokyo]

[SMBench] Step 14000 — 1/5: Multi-Rule Reasoning . . .
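A check of this kind could be scored with a simple substring match. The sketch below is hypothetical: the function name and the matching rule are assumptions, since the post does not show how the test harness decides whether an output is correct.

```python
def contains_expected(output: str, expected: str) -> bool:
    """Hypothetical scoring rule: case-insensitive substring match."""
    return expected.lower() in output.lower()


# Completions copied from the log lines above (shortened).
samples = [
    ("the city of Nice. France may also refer to: France (surname)", "Paris"),
    ("the capital of the autonomous prefecture of Hokkaido.", "Tokyo"),
]

for output, expected in samples:
    tag = "CORRECT" if contains_expected(output, expected) else "INCORRECT"
    print(f"[EXPECTED: {expected}][{tag}]")
```

Under this assumed rule neither logged completion would count as correct, so the harness that produced the log may score outputs differently.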

Model Architecture and Memory Optimization

- JSON configuration:

{

"experiment_name": "deepseek_v3_7b_lowvram",

"output_dir": "",

"seed": 420,

"model": {

"num_layers": 24,

"vocab_size": 50304,

"norm_type": "rmsnorm",

"norm_eps": 1e-06,

"tie_word_embeddings": false,

"init_method_std": 0.006,

"first_k_dense_replace": 8,

"dense_layer_interval": 1,

"paper_compliant": false,

"mla": {

"d_model": 1408,

"d_latent": 352,

"num_heads": 22,

"num_kv_heads": 2,

"max_context_length": 4096,

"use_flash_mla": false

},

"moe": {

"num_experts": 64,

"num_experts_per_token": 4,

"expert_intermediate_size": 1536,

"expert_dim": 1536,

"dropout": 0.0,

"num_shared_experts": 1

},

"fusions": {

"use_fused_expert_ffn": true,

"use_te_fused_topk": false,

"use_te_fused_permute": false,

"use_fused_softmax": true,

"fused_softmax_in_fp32": true,

"use_group_limited_topk": true

},

"memory_optimization": {

"use_galore": false,

"galore_rank": 256,

"galore_update_proj_gap": 500,

"galore_scale": 1.0

},

"training": {

"device": "cuda",

"global_batch_size": 256,

"micro_batch_size": 4,

"gradient_accumulation_steps": 64,

"seq_length": 1024,

"max_batch_seq_multiplier": 1.25,

"tokens_per_parameter_ratio": 40.0,

"total_training_tokens": 280000000000,

"learning_rate": 0.00042,

"min_learning_rate": 4.2e-05,

"lr_preset": "deepseek_v3"

},

"data": {

"use_multi_source": true,

"sources": [

{

"name": "redpajama",

"type": "dolma",

"subset": "dolma_v1_6_redpajama",

"weight": 0.45,

"description": "RedPajama - CommonCrawl-like diverse web/code/books"

},

{

"name": "stack",

"type": "dolma",

"subset": "dolma_v1_6_stack",

"weight": 0.25,

"description": "Stack Overflow - Q&A in English"

}

],

"cache_dir": "",

"sanitization": {

"enabled": true,

"target_language": "en",

"min_language_confidence": 0.9,

"min_article_length": 100

},

"preprocessing": {

"num_workers": 8,

"shuffle": true,

"shuffle_seed": 42

},

"max_articles": null,

"focus_historical": false,

"boost_hiroshima_content": false

},

"distributed": {

"backend": "nccl",

"launcher": "single_gpu",

"tensor_parallel_size": 1,

"pipeline_parallel_size": 1,

"expert_parallel_size": 1,

"data_parallel_size": 1,

"zero_stage": 2,

"zero_offload": true,

"overlap_grad_reduce": true,

"overlap_param_gather": true

},

"checkpointing": {

"save_interval": 1000,

"save_total_limit": 3,

"resume_from_checkpoint": null,

"checkpoint_format": "pytorch",

"save_optimizer_states": true

},

"logging": {

"log_level": "INFO",

"log_interval": 100,

"tensorboard_dir": "",

"wandb_enabled": false,

"tensorboard_enabled": true

},

"validation": {

"enabled": true,

"eval_interval": 1000,

"eval_samples": 500,

"metrics": ["loss", "perplexity"],

"patience": 300,

"early_stopping": false

},

"profiling": {

"trace_nvtx": false

},

"gpu_optimization": {

"cuda_graphs": true,

"torch_compile": true,

"flash_attention": true,

"fused_kernels": true,

"autocast_dtype": "bfloat16"

}

}

}
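A few of the values above are tied together arithmetically, and a small consistency check can catch mismatches before a long run starts. This is a sketch under assumptions: the helper names are hypothetical, the dictionary is a flattened subset of the configuration, and whether the real data loader renormalizes source weights is not stated in the post.

```python
config = {
    "global_batch_size": 256,
    "micro_batch_size": 4,
    "gradient_accumulation_steps": 64,
    "data_parallel_size": 1,
    "d_model": 1408,
    "num_heads": 22,
    "source_weights": {"redpajama": 0.45, "stack": 0.25},
}


def check_config(cfg: dict) -> list:
    """Return a list of human-readable problems (empty if none are found)."""
    problems = []
    implied = (cfg["micro_batch_size"]
               * cfg["gradient_accumulation_steps"]
               * cfg["data_parallel_size"])
    if implied != cfg["global_batch_size"]:
        problems.append(f"global batch {cfg['global_batch_size']} != {implied}")
    if cfg["d_model"] % cfg["num_heads"] != 0:
        problems.append("d_model is not divisible by num_heads")
    return problems


def normalized_weights(weights: dict) -> dict:
    """Scale sampling weights so that they sum to 1."""
    total = sum(weights.values())
    return {name: w / total for name, w in weights.items()}


print(check_config(config))
print("head_dim:", config["d_model"] // config["num_heads"])
print(normalized_weights(config["source_weights"]))
```

The two listed source weights sum to 0.70, so they either get renormalized at load time or the configuration includes further sources that are not shown here.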

Current Status:

- I have achieved a throughput of approximately 20 seconds per step on a single GPU with the current configuration.

- To summarize, I am using the DeepSeek architecture and applying various optimizations to reduce VRAM usage. The current run trains a 7B-parameter model with 64 experts and uses about 80 GB of VRAM in total.
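The step time can be turned into a rough throughput and schedule estimate using the batch settings from the configuration. This sketch assumes every step consumes the full global batch at `seq_length` 1024 and that the 20 seconds per step stays constant; the effect of `max_batch_seq_multiplier` is unknown and ignored.

```python
import math

GLOBAL_BATCH_SIZE = 256           # "global_batch_size"
SEQ_LENGTH = 1024                 # "seq_length"
TOTAL_TOKENS = 280_000_000_000    # "total_training_tokens"
SECONDS_PER_STEP = 20.0           # approximate figure reported above

tokens_per_step = GLOBAL_BATCH_SIZE * SEQ_LENGTH
total_steps = math.ceil(TOTAL_TOKENS / tokens_per_step)


def tokens_seen(step: int) -> int:
    """Tokens consumed after a given number of optimizer steps."""
    return step * tokens_per_step


tokens_per_second = tokens_per_step / SECONDS_PER_STEP
days_total = total_steps * SECONDS_PER_STEP / 86_400

print(f"tokens per step:   {tokens_per_step:,}")
print(f"tokens per second: {tokens_per_second:,.0f}")
print(f"steps for budget:  {total_steps:,}")
print(f"estimated days:    {days_total:,.0f}")
print(f"tokens at 15,000 steps: {tokens_seen(15_000):,}")
```

Under these assumptions the run processes about 13,000 tokens per second, has seen roughly 3.9B tokens at 15,000 steps, and would need on the order of 250 days on a single GPU to reach the full 280B-token budget.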

Next Steps:

- I need to ensure that the model can run smoothly and efficiently before making it publicly available. This involves addressing any remaining issues, such as memory optimizations and ensuring all components are working correctly.

- I am also considering how best to make this open-source initiative successful by establishing guidelines for contributors and ensuring that models developed using this framework remain accessible and transparent.

Key Takeaways

- I am currently training a 7B-parameter LLM with optimizations aimed at reducing VRAM usage.

- I am planning to make the codebase public once it has been thoroughly tested and optimized for maximum efficiency.

- There are plans in place to establish guidelines for contributors to ensure that all models remain accessible and usable by the broader community.

If you have any specific questions or suggestions, please let me know!

Originally published at reddit.com. Curated by AI Maestro.
